Regularization

Attention Dropout

Attention Dropout is a type of dropout used in attention-based architectures, where elements of the softmax output (the attention weights) are randomly dropped. For example, in scaled dot-product attention, we would drop elements from the first factor, the softmax term:

$$ {\text{Attention}}(Q, K, V) = \text{softmax}\left(\frac{QK^{T}}{\sqrt{d_k}}\right)V $$
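
As a rough illustration of the equation above, the following is a minimal pure-Python sketch of scaled dot-product attention with dropout applied to the softmax output. It assumes the common "inverted dropout" convention (surviving weights are rescaled by 1 / (1 - p) during training and dropout is disabled at evaluation time); the function and parameter names (`attention_with_dropout`, `p_drop`, `training`, `rng`) are hypothetical and not taken from any particular library.

```python
import math
import random


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention_with_dropout(Q, K, V, p_drop=0.1, training=True, rng=None):
    """Scaled dot-product attention with dropout on the attention weights.

    Q, K, V are lists of row vectors (lists of floats). The parameter names
    are illustrative assumptions; p_drop is expected to lie in [0, 1).
    """
    rng = rng or random.Random()
    d_k = len(K[0])
    d_v = len(V[0])
    output = []
    for q in Q:
        # Scores of this query against every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        if training and p_drop > 0.0:
            # Assumed inverted dropout: zero each weight with probability
            # p_drop and rescale the survivors. Rows are not renormalized,
            # so they no longer sum exactly to 1 during training.
            keep = 1.0 - p_drop
            weights = [w / keep if rng.random() >= p_drop else 0.0 for w in weights]
        # Weighted sum of the value vectors.
        output.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(d_v)])
    return output
```

Calling `attention_with_dropout(Q, K, V, training=False)` returns the plain attention output, while `training=True` randomly removes individual query-key connections on each call.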

Tasks

| Task | Papers | Share |
|---|---|---|
| Retrieval | 77 | 9.41% |
| Language Modelling | 69 | 8.44% |
| Question Answering | 46 | 5.62% |
| Large Language Model | 40 | 4.89% |
| Sentence | 26 | 3.18% |
| In-Context Learning | 23 | 2.81% |
| Text Generation | 23 | 2.81% |
| Code Generation | 18 | 2.20% |
| Information Retrieval | 17 | 2.08% |

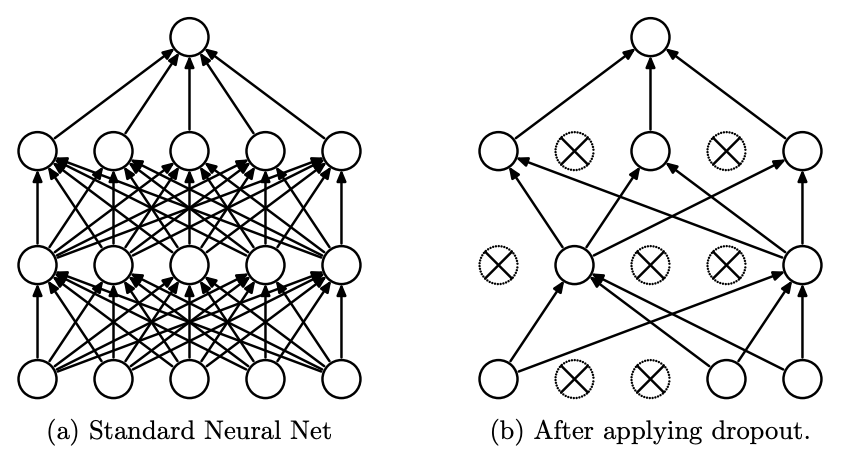

Dropout